Table of contents |

|

|

This page is maintained by: KE Usage Statistics Work Group |

| Date | Version | Owner | Version history | PDF |
|---|---|---|---|---|
| 2010-04-13 | 0.2 | | 0.2 | |
| 2010-03-25 | 0.1 | Peter Verhaar | 0.1 | |

Guidelines for the exchange of usage statistics from a repository to a central server using OAI-PMH and OpenURL Context Objects.


The impact or the quality of academic publications is traditionally measured by considering the number of times the text is cited. Nevertheless, the existing system for citation-based metrics has frequently been the target of serious criticism. Citation data provided by ISI focus on published journal articles only, and other forms of academic output, such as dissertations or monographs are mostly neglected. In addition, it normally takes a long time before citation data can become available, because of publication lags. As a result of this growing dissatisfaction with citation-based metrics, a number of research projects have begun to explore alternative methods for the measurement of academic impact. Many of these initiatives have based their findings on usage data. An important advantage of download statistics is that they can readily be applied to all electronic resources, regardless of their contents. Whereas citation analyses only reveal usage by authors of journal articles, usage data can in theory be produced by any user. An additional benefit of measuring impact via the number of downloads is the fact that usage data can become available directly after the document has been placed on-line.

Virtually all web servers that provide access to electronic resources record usage events as part of their log files. Such files usually provide detailed information on the documents that have been requested, on the users that have initiated these requests, and on the moments at which these requests took place. One important difficulty is the fact that these logs are usually structured according to a proprietary format. Before usage data from different institutions can be compared in a meaningful and consistent way, the log entries need to be standardised and normalised. Various projects have investigated how such data harmonisation can take place. In the MESUR project, usage data have been standardised by serialising the information from log files as XML files structured according to the OpenURL Context Objects schema (Bollen and Van de Sompel, 2006). This same standard is recommended in the JISC Usage Statistics Final Report. Using this metadata standard, it becomes possible to set up an infrastructure in which usage data are aggregated within a network of distributed repositories. The PIRUS-I project (Publishers and Institutional Repository Usage Statistics), which was funded by JISC, has investigated how such exchange of usage data can take place. An important outcome of this project was a range of scenarios for the "creation, recording and consolidation of individual article usage statistics that will cover the majority of current repository installations" .

"Developing a global standard to enable the recording, reporting and consolidation of online usage statistics for individual journal articles hosted by institutional repositories, publishers and other entities (Final Report)", p.3. < http://www.jisc.ac.uk/media/documents/programmes/pals3/pirus_finalreport.pdf > |

Whereas these three projects all make use of the OpenURL Context Object standard, some subtle differences have emerged in the way in which this standard is actually used. Nevertheless, it is important to ensure that statistics are produced in exactly the same manner, since, otherwise, it would be impossible to compare metrics produced by different projects. With the support of Knowledge Exchange, a collaborative initiative for leading national organisations in Europe, an initiative was begun to align the technical specifications of these various projects. This document is a first proposal for international guidelines for the accumulation and the exchange of usage data. The proposal is based on a careful comparison of the technical specifications that have been developed by these three projects.

A usage event takes place when a user downloads a document which is managed in a repository, or when a user views the metadata that is associated with this document. The user may have arrived at this document through the mediation of a referrer. This is typically a search engine. Alternatively, the request may have been mediated by a link resolver. The usage event in turn generates usage data.

The institution that is responsible for the repository that contains the requested document is referred to as a usage data provider. Data can be stored locally in a variety of formats. Nevertheless, to allow for a meaningful central collection of data, usage data providers must be able to expose the data in a standardised data format, so that they can be harvested and transferred to a central database. The institution that manages the central database is referred to as the usage data aggregator. The data must be transferred using a well-defined transfer protocol. Ultimately, certain services can be built on the basis of the data that have been accumulated.

The PIRUS project identified three strategies for exchanging usage statistics.

Usage Statistics Exchange Strategy B will be used. In this strategy, usage events are stored at the local repository and are harvested on request by a central server.

In particular, this means that normalisation does not have to be done by repositories, which will make implementation and acceptance easier.

The normalisation is done in two locations: once at the repository site, and once at the central service.

At the repository, the usage events are filtered against a commonly agreed robot list in order to reduce data traffic. At the central service, COUNTER rules are applied to provide statistics that are comparable with those of other services (such as a COUNTER reporting service or Google data visualisations).

The following projects and parties will endorse these guidelines, by applying these in their implementation.

| project | status |
|---|---|
| SURFsure | |
| PIRUS-II | |
| OA-Statistics | |
| NEEO | |
| COUNTER | |
| ..publisher.. Plos? | |

The filter is implemented at the repository and is meant for reducing traffic by 80%. This list is basic and simple, and is not meant to filter out false-positives.

We agreed to filter usage events at the repository before sending them to a central server. The filter will be based on "internet robots" according to the definition below. A basic list is used by all repositories to make the filtering comparable.

The "user" as defined in section 2 of this report is assumed to be a human user. Consequently, the focus of this document is on requests which have consciously been initiated by human beings. Automated visits by internet robots must be filtered from the data as much as possible. Internet robots can be identified by comparing the value of the User Agent HTTP header to values which are present in a list of known robots which is managed by a central authority. All institutions that send usage data must first check the entry against this list of internet robots. If the robot is in the list, the event should not be sent. \[This is an issue that still needs to be addressed. Should robot filtering take place on the basis of robot names or on the basis of IP addresses?\] |

We agreed to filter based on the robot name, with regular expressions |
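The filtering step described above can be sketched roughly as follows. This is only an illustration, not the working group's implementation: the patterns are a small hypothetical excerpt of the shared robot list, the case-insensitive matching is an assumption, and the event dictionaries with a `user_agent` key are an invented input shape.

```python
import re

# Hypothetical excerpt of the shared robot list; the real list is maintained centrally.
ROBOT_PATTERNS = [r"alexa", r"baiduspider", r"archive\.org_bot", r"Brutus\/AET"]

# Compile all patterns into one alternation. Case-insensitive matching is an assumption.
ROBOT_REGEX = re.compile("|".join(f"(?:{p})" for p in ROBOT_PATTERNS), re.IGNORECASE)


def is_robot(user_agent: str) -> bool:
    """Return True when the User-Agent value matches any pattern in the robot list."""
    return ROBOT_REGEX.search(user_agent or "") is not None


def filter_events(events):
    """Keep only the usage events whose User-Agent is not a known robot."""
    return [event for event in events if not is_robot(event.get("user_agent", ""))]
```

A repository would run something like `filter_events` over its logged events before exposing them to the aggregator, so that events from listed robots are never sent.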

A small note about behaviour analysis with heuristics:

Heuristic analysis should follow a transparent algorithm, and is NOT done by the repositories. Heuristics might be applied at the central server in a later phase, when usage statistics have matured more. Centralised heuristics will reduce the debate on reliability.

Heuristics are out of scope for these guidelines at the moment.

This section tells us: "when do we classify a webagent as a robot?"

If a single user clicks repeatedly on the same document within a given amount of time, this should be counted as a single request. This measure is needed to minimise the impact of conscious falsification by authors. There appears to be some difference as regards the time-frame of double clicks. The table below provides an overview of the various timeframes that have been suggested.

| Source | Timeframe |
|---|---|
| COUNTER | 10 seconds |
| LogEC | 1 month |
| AWStats | 1 hour |
| IFABC | 30 minutes |

Individual usage data providers should not filter double clicks. This form of normalisation should be carried out on a central level by the aggregator.
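As an illustration of how an aggregator might collapse double clicks, here is a minimal sketch. It assumes events arrive as `(requester, document, timestamp)` tuples (a hypothetical shape), that the window is measured from the previous click by the same requester on the same document, and that the 10-second COUNTER value is the default. None of these choices is prescribed by the guidelines.

```python
from datetime import datetime, timedelta


def collapse_double_clicks(events, window=timedelta(seconds=10)):
    """Count repeated requests by one requester for one document within
    `window` of the previous click as a single request.

    `events` is an iterable of (requester_id, document_id, timestamp) tuples.
    """
    last_seen = {}  # (requester, document) -> timestamp of the previous click
    kept = []
    for requester, document, ts in sorted(events, key=lambda e: e[2]):
        key = (requester, document)
        previous = last_seen.get(key)
        if previous is None or ts - previous > window:
            kept.append((requester, document, ts))
        # Always remember the latest click, so a burst of clicks keeps collapsing.
        last_seen[key] = ts
    return kept
```

Because the window is a parameter, the same function can be run with any of the timeframes in the table above to compare their effect on the counts.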

The data format is OpenURL Context Objects. In the KE Usage Statistics Guidelines we make a distinction between the CORE set and EXTENSIONS.

The CORE set is required to set up a reliable statistics service.

The EXTENSIONS are extra, and are more likely to be specific to projects, their user interfaces and their specific user demands. These extensions are optional to implement, but are shown here to inform the statistics community what information they might miss or be unable to distinguish in the long run.

To be able to compare usage data from different repositories, the data need to be available in a uniform format. An agreement needs to be reached, firstly, on which aspects of the usage event need to be recorded. In addition, guidelines need to be developed for the format in which this information can be expressed. Following recommendations from MESUR and the JISC Usage Statistics Project, it will be stipulated that usage events need to be serialized in XML using the data format that is specified in the OpenURL Context Objects schema. The XML Schema for XML Context Objects can be accessed at http://www.openurl.info/registry/docs/info:ofi/fmt:xml:xsd:ctx

The OpenURL Framework Initiative recommends that each community that adopts the OpenURL Context Objects Schema should define its application profile. Such a profile must consist of specifications for the use of namespaces, character encodings, serialisation, constraint languages, formats, metadata formats, and transport protocols. This section will attempt to provide such a profile, based on the experiences from the projects NEEO, SURE and OA-Statistics.

The root element of the XML-document must be <context-objects>. It must contain a reference to the official schema and declare three namespaces:

| Namespace | URI |
|---|---|
| OpenURL Context Objects | info:ofi/fmt:xml:xsd:ctx |
| Dublin Core Metadata Initiative | |
| DINI Requester info | |

Each usage event must be described in a separate <context-object> element. All individual <context-object> elements must be wrapped into the <context-objects> root element.

Each <context-object> must have a timestamp attribute, and it may optionally be given an identifier attribute. These two attributes can be used to record the request time and an identification of the usage event. Details are provided below.

| Description | The exact time on which the usage event took place. |
|---|---|
| XPath | ctx:context-object/@timestamp |
| Usage | Mandatory |
| Format | The format of the request time must conform to ISO8601. The YYYY-MM-DDTHH:MM:SSZ representation must be used. |
| Example | 2009-07-29T08:15:46+01:00 |

| Description | Unique identification of the usage event. |
|---|---|
| XPath | ctx:context-object/@identifier |
| Usage | Optional |
| Format | The identifier will be formed by combining the OAI-PMH identifier, the date and a three-letter code for the institution. Next, this identifier will be hashed using MD5, so that the identifier becomes a string of 32 hexadecimal digits. |
| Example | b06c0444f37249a0a8f748d3b823ef2a |
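A usage event identifier of this kind could be derived as sketched below. The guidelines do not say how the three parts are concatenated, so the `|` separator and the sample values are assumptions made purely for illustration.

```python
import hashlib


def usage_event_identifier(oai_identifier: str, date: str, institution_code: str) -> str:
    """Combine the three parts and return their MD5 digest as 32 hex digits."""
    # Assumption: the parts are joined with "|"; the guidelines do not fix a separator.
    raw = "|".join([oai_identifier, date, institution_code])
    return hashlib.md5(raw.encode("utf-8")).hexdigest()


# Hypothetical input values, for illustration only.
identifier = usage_event_identifier("oai:example.org:1887/12100", "2009-07-29", "XYZ")
```

The result is always 32 lower-case hexadecimal characters, which matches the shape of the example value in the table above.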

Within a <context-object> element, the following subelements can be used:

| Element name | minOccurs | maxOccurs |
|---|---|---|
| Referent | 1 | 1 |
| ReferringEntity | 0 | 1 |
| Requester | 1 | 1 |
| ServiceType | 1 | 1 |
| Resolver | 1 | 1 |
| Referrer | 0 | 1 |
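To make the overall structure concrete, the following sketch serialises one usage event with the mandatory subelements from the table above. It is a simplified illustration under several assumptions: the input dictionary shape is invented, the `dcterms` namespace URI is copied from the SUSHI response listing later in this document, and placing that URI in `ctx:format` is a guess. The output has not been validated against the official schema.

```python
import xml.etree.ElementTree as ET

CTX_NS = "info:ofi/fmt:xml:xsd:ctx"
# Assumption: namespace URI as it appears in the SUSHI response listing of this document.
DCTERMS_NS = "http://dublincore.org/documents/2008/01/14/dcmi-terms/"
ET.register_namespace("ctx", CTX_NS)
ET.register_namespace("dcterms", DCTERMS_NS)


def ctx(tag: str) -> str:
    """Qualify a tag name with the Context Objects namespace."""
    return f"{{{CTX_NS}}}{tag}"


def add_entity(parent, name, identifiers):
    """Append an entity element with one ctx:identifier child per value."""
    entity = ET.SubElement(parent, ctx(name))
    for value in identifiers:
        ET.SubElement(entity, ctx("identifier")).text = value
    return entity


def build_context_objects(events):
    """Serialise a list of event dictionaries (a hypothetical input shape)."""
    root = ET.Element(ctx("context-objects"))
    for event in events:
        obj = ET.SubElement(root, ctx("context-object"), timestamp=event["timestamp"])
        add_entity(obj, "referent", event["referent"])
        if event.get("referring_entity"):  # optional entity
            add_entity(obj, "referring-entity", event["referring_entity"])
        add_entity(obj, "requester", event["requester"])
        service = add_entity(obj, "service-type", [])
        by_val = ET.SubElement(service, ctx("metadata-by-val"))
        ET.SubElement(by_val, ctx("format")).text = DCTERMS_NS  # assumed content
        metadata = ET.SubElement(by_val, ctx("metadata"))
        ET.SubElement(metadata, f"{{{DCTERMS_NS}}}format").text = event["request_type"]
        add_entity(obj, "resolver", event["resolver"])
    return root


# Sample values taken from the examples given in this document.
event = {
    "timestamp": "2009-07-29T08:15:46+01:00",
    "referent": ["https://openaccess.leidenuniv.nl/bitstream/1887/12100/1/Thesis.pdf"],
    "requester": ["c06f0464f37249a0a9f848d4b823ef2a", "118.94.150.0"],
    "request_type": "objectFile",
    "resolver": ["www.openaccess.leidenuniv.nl"],
}
root = build_context_objects([event])
xml_text = ET.tostring(root, encoding="unicode")
```

Each entity is written in the order of the table, and the optional `referring-entity` is emitted only when the event carries a value for it.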

The <referent> element must provide information on the document that is requested. More specifically, it must record the following data elements.

| Description | The URL of the object file or the metadata record that is requested. In the case of a request for an object file, the URL must be connected to the object's URN by the aggregator, so that the associated metadata can be obtained. |
|---|---|
| XPath | ctx:context-object/ctx:referent/ctx:identifier |
| Usage | Mandatory |
| Format | URL. |
| Example | https://openaccess.leidenuniv.nl/bitstream/1887/12100/1/Thesis.pdf |

| Description | A globally unique identification of the resource that is requested must be provided. |
|---|---|
| XPath | ctx:context-object/ctx:referent/ctx:identifier |
| Usage | Mandatory if applicable |
| Format | URN |
| Example | |

The <ReferringEntity> provides information on the environment that has forwarded the user to the document that was requested. This referrer can be identified in two ways.

| Description | The entity which has directed the user to the requested resource. As a minimal requirement, this must be the URL provided by the HTTP referrer string. |
|---|---|
| XPath | ctx:referring-entity/ctx:identifier |
| Usage | Mandatory if applicable |
| Format | URL |
| Example | http://www.google.nl/search?hl=nl&q=beleidsregels+artikel+4%3A84&meta= |

| Description | The referrer may be categorised on the basis of a limited list of known referrers. |
|---|---|
| XPath | ctx:referring-entity/ctx:identifier |
| Usage | Optional |
| Format | The following values are allowed: "google", "google scholar", "bing", "yahoo", "altavista" |
| Example | |

The user who has sent the request for the file is identified in the <Requester> element.

| Description | The user can be identified by providing the IP-address. Including the full IP-address in the description of a usage event is not permitted by privacy legislation. For this reason, the IP-address must be hashed using MD5. |
|---|---|
| XPath | ctx:context-object/ctx:requester/ctx:identifier |
| Usage | Mandatory |
| Format | 32 hexadecimal digits. |
| Example | c06f0464f37249a0a9f848d4b823ef2a |

| Description | When the IP-address is hashed, information on the geographic location, for instance, can no longer be derived. For this reason, the C-Class subnet must be provided. The C-Class subnet, which consists of the three most significant bytes of the IP-address, is used to designate the network ID. The final (least significant) byte, which designates the host ID, is replaced with a '0'. |
|---|---|
| XPath | ctx:context-object/ctx:requester/ctx:identifier |
| Usage | Mandatory |
| Format | Three decimal numbers separated by dots, followed by a dot and a '0'. |
| Example | 118.94.150.0 |
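The two requester identifiers could be derived from a logged IPv4 address roughly as follows. This sketch assumes the address is hashed in its dotted-decimal text form and that only IPv4 addresses occur. The guidelines do not state either point.

```python
import hashlib
import ipaddress


def hashed_ip(ip: str) -> str:
    """Return the MD5 digest (32 hex digits) of the dotted-decimal IP address."""
    # Assumption: the textual form of the address is what gets hashed.
    return hashlib.md5(ip.encode("ascii")).hexdigest()


def c_class_subnet(ip: str) -> str:
    """Replace the final byte (the host ID) of an IPv4 address with 0."""
    address = ipaddress.IPv4Address(ip)  # raises ValueError for anything that is not IPv4
    first, second, third, _host = str(address).split(".")
    return f"{first}.{second}.{third}.0"
```

For instance, `c_class_subnet("118.94.150.23")` yields `118.94.150.0`, the form shown in the example row above.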

| Description | The country from which the request originated must also be provided explicitly. |
|---|---|
| XPath | ctx:context-object/ctx:requester/ctx:metadata-by-val/ctx:metadata/dcterms:spatial |
| Usage | Optional |
| Format | A two-letter code in lower case, following the ISO 3166-1-alpha-2 standard. http://www.iso.org/iso/english_country_names_and_code_elements |
| Example | ne |

| Description | The user may be categorised, using a list of descriptive terms. If no classification is possible, it must be omitted. |
|---|---|
| XPath | ctx:context-object/ctx:requester/ctx:metadata/dini:requesterinfo/dini:classification If this element is used, the <metadata> element must be preceded by |
| Usage | Optional |
| Format | Three values are allowed: |
| Example | institutional |

| Description | The identifier of the complete usage session of a given user. |
|---|---|
| XPath | ctx:context-object/ctx:requester/ctx:metadata/dini:requesterinfo/dini:classification/dini:hashed-session If this element is used, the <metadata> element must be preceded by |
| Usage | Optional |
| Format | If the session ID is a hash itself, it must be hashed. Otherwise, provide a hash of the session ID. |
| Example | 660b14056f5346d0 |

| Description | The full HTTP user agent string |
|---|---|
| XPath | ctx:context-object/ctx:requester/ctx:metadata/dini:requesterinfo/dini:classification/dini:user-agent If this element is used, the <metadata> element must be preceded by |
| Usage | Optional |
| Format | String |
| Example | Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.9.0.6) Gecko/2009011913 Firefox/3.0.6 (.NET CLR 3.5.30729) |

| Description | The request type specifies if the request is for an object file or a metadata record. |
|---|---|
| XPath | ctx:context-object/ctx:service-type/ctx:metadata-by-val/ctx:metadata/dcterms:format |
| Usage | Mandatory |
| Format | Two values are allowed: "objectFile" or "metadataView" |
| Example | objectFile |

| Description | An identification of the institution that is responsible for the repository in which the requested document is stored. |
|---|---|
| XPath | ctx:context-object/ctx:resolver/ctx:identifier |
| Usage | Mandatory |
| Format | URL of the institution's repository |
| Example | www.openaccess.leidenuniv.nl |

| Description | In the case of link resolver usage data, the URL of the OpenURL resolver must be provided. |
|---|---|
| XPath | ctx:context-object/ctx:resolver/ctx:identifier |
| Usage | Optional |
| Format | URL |
| Example | |

| Description | The identifier of the context from within which the user triggered the usage of the target resource. |
|---|---|
| XPath | ctx:context-object/ctx:referrer/ctx:identifier |
| Usage | Optional |
| Format | URL |
| Example | |

place here the extensions

OpenAIRE might add additional data, for example for "Projects".

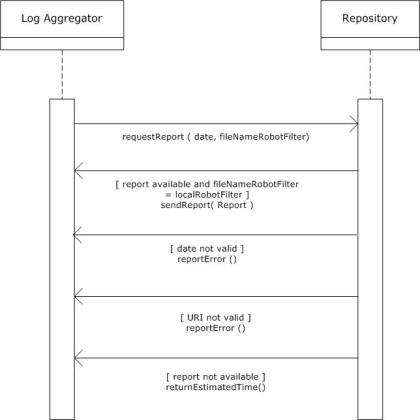

OAI-PMH is a relatively lightweight protocol which does not allow for bidirectional traffic. If more reliable error handling is required, the Standardised Usage Statistics Harvesting Initiative (SUSHI) must be used. SUSHI ( http://www.niso.org/schemas/sushi/ ) was developed by NISO (National Information Standards Organization) in cooperation with COUNTER. This document assumes that the communication between the aggregator and the usage data provider takes place as explained in figure 1.

Figure 1.

The interaction commences when the log aggregator sends a request for a report about the daily usage of a certain repository. Two parameters must be sent as part of this request: (1) the date of the report and (2) the file name of the most recent robot filter. The filename that is mentioned in this request will be compared to the local filename. Four possible responses can be returned by the repository.

In SUSHI version 1.0, the following information must be sent along with each request:

This request will activate a special tool that can inspect the server logging and that can return the requested data. These data are transferred as OpenURL Context Object log entries, as part of a SUSHI response.

The response must repeat all the information from the request, and provide the requested report as XML payload.

The usage data are subsequently stored in a central database. External parties can obtain information about the contents of this central database through specially developed web services. The log harvester must ultimately expose these data in the form of COUNTER-compliant reports.

Listing 2 is an example of a SUSHI request, sent from the log aggregator to a repository.

<?xml version="1.0" encoding="UTF-8"?> <soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://schemas.xmlsoap.org/soap/envelope/ http://schemas.xmlsoap.org/soap/envelope/"> <soap:Body> <ReportRequest xmlns:ctr="http://www.niso.org/schemas/sushi/counter" xsi:schemaLocation="http://www.niso.org/schemas/sushi/counter http://www.niso.org/schemas/sushi/counter_sushi3_0.xsd" xmlns="http://www.niso.org/schemas/sushi" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"> <Requestor> <ID>www.logaggregator.nl</ID> <Name>Log Aggregator</Name> <Email>logaggregator@surf.nl</Email> </Requestor> <CustomerReference> <ID>www.leiden.edu</ID> <Name>Leiden University</Name> </CustomerReference> <ReportDefinition Release="urn:robots-v1.xml" Name="Daily Report v1"> <Filters> <UsageDateRange> <Begin>2009-12-21</Begin> <End>2009-12-22</End> </UsageDateRange> </Filters> </ReportDefinition> </ReportRequest> </soap:Body> </soap:Envelope> |

Note that the intent of the SUSHI request above is to see all the usage events that have occurred on 21 December 2009. The SUSHI schema was originally developed for the exchange of COUNTER-compliant reports. In the documentation of the SUSHI XML schema, it is explained that COUNTER usage is only reported at the month level. In SURE, only daily reports can be provided. Therefore, it will be assumed that the implied time on the date that is mentioned is 0:00. The request in the example that is given thus involves all the usage events that have occurred between 2009-12-21T00:00:00 and 2009-12-22T00:00:00.
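The date interpretation described here can be expressed as a small helper. The sketch assumes that the interval is half-open (the begin instant is included, the end instant is excluded) and that timestamps are compared without time zone information. The text does not settle either point.

```python
from datetime import date, datetime, time


def usage_interval(begin: str, end: str):
    """Translate SUSHI <Begin>/<End> dates into datetimes with an implied time of 0:00."""
    start = datetime.combine(date.fromisoformat(begin), time.min)
    stop = datetime.combine(date.fromisoformat(end), time.min)
    return start, stop


def in_interval(ts: datetime, start: datetime, stop: datetime) -> bool:
    """Half-open membership test: start <= ts < stop (an assumption)."""
    return start <= ts < stop


start, stop = usage_interval("2009-12-21", "2009-12-22")
```

With the dates from the request listing, an event logged at 23:59:59 on 21 December falls inside the interval, while one logged at exactly midnight on 22 December belongs to the next daily report.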

As explained previously, the repository can respond in four different ways. If the parameters of the request are valid, and if the requested report is available, the OpenURL Context Objects will be sent immediately. The OpenURL Context Objects will be wrapped in a <Report> element, as can be seen in listing 3.

<?xml version="1.0" encoding="UTF-8"?> <soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://schemas.xmlsoap.org/soap/envelope/ http://schemas.xmlsoap.org/soap/envelope/"> <soap:Body> <ReportResponse xmlns:ctr="http://www.niso.org/schemas/sushi/counter" xsi:schemaLocation="http://www.niso.org/schemas/sushi/counter http://www.niso.org/schemas/sushi/counter_sushi3_0.xsd" xmlns="http://www.niso.org/schemas/sushi" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"> <Requestor> <ID>www.logaggregator.nl</ID> <Name>Log Aggregator</Name> <Email>logaggregator@surf.nl</Email> </Requestor> <CustomerReference> <ID>www.leiden.edu</ID> <Name>Leiden University</Name> </CustomerReference> <ReportDefinition Release="urn:DRv1" Name="Daily Report v1"> <Filters> <UsageDateRange> <Begin>2009-12-22</Begin> <End>2009-12-23</End> </UsageDateRange> </Filters> </ReportDefinition> <Report> <ctx:context-objects xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns:dcterms="http://dublincore.org/documents/2008/01/14/dcmi-terms/" xmlns:ctx="info:ofi/fmt:xml:xsd:ctx"> <ctx:context-object timestamp="2009-11-09T05:56:18+01:00"> ... </ctx:context-object> </ctx:context-objects> </Report> </ReportResponse> </soap:Body> </soap:Envelope> |

If the begin date and the end date in the request of the log aggregator form a period that exceeds one day, an error message must be sent. In the SUSHI schema, such messages may be sent in an <Exception> element. Three types of errors can be distinguished. Each error type is given its own number. A human-readable error message is provided under <Message>.

<?xml version="1.0" encoding="UTF-8"?> <soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://schemas.xmlsoap.org/soap/envelope/ http://schemas.xmlsoap.org/soap/envelope/"> <soap:Body> <ReportResponse xmlns:ctr="http://www.niso.org/schemas/sushi/counter" xsi:schemaLocation="http://www.niso.org/schemas/sushi/counter http://www.niso.org/schemas/sushi/counter_sushi3_0.xsd" xmlns="http://www.niso.org/schemas/sushi" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"> <Requestor> <ID>www.logaggregator.nl</ID> <Name>Log Aggregator</Name> <Email>logaggregator@surf.nl</Email> </Requestor> <CustomerReference> <ID>www.leiden.edu</ID> <Name>Leiden University</Name> </CustomerReference> <ReportDefinition Release="urn:DRv1" Name="Daily Report v1"> <Filters> <UsageDateRange> <Begin>2009-12-22</Begin> <End>2009-12-23</End> </UsageDateRange> </Filters> </ReportDefinition> <Exception> <Number>1</Number> <Message>The range of dates that was provided is not valid. Only daily reports are available.</Message> </Exception> </ReportResponse> </soap:Body> </soap:Envelope> |

A second type of error may be caused by the fact that the file that is mentioned in the request cannot be accessed. In this situation, the response will look as follows:

<?xml version="1.0" encoding="UTF-8"?> <soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://schemas.xmlsoap.org/soap/envelope/ http://schemas.xmlsoap.org/soap/envelope/"> <soap:Body> <ReportResponse xmlns:ctr="http://www.niso.org/schemas/sushi/counter" xsi:schemaLocation="http://www.niso.org/schemas/sushi/counter http://www.niso.org/schemas/sushi/counter_sushi3_0.xsd" xmlns="http://www.niso.org/schemas/sushi" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"> <Requestor> <ID>www.logaggregator.nl</ID> <Name>Log Aggregator</Name> <Email>logaggregator@surf.nl</Email> </Requestor> <CustomerReference> <ID>www.leiden.edu</ID> <Name>Leiden University</Name> </CustomerReference> <ReportDefinition Release="urn:DRv1" Name="Daily Report v1"> <Filters> <UsageDateRange> <Begin>2009-12-22</Begin> <End>2009-12-23</End> </UsageDateRange> </Filters> </ReportDefinition> <Exception> <Number>2</Number> <Message>The file describing the internet robots is not accessible.</Message> </Exception> </ReportResponse> </soap:Body> </soap:Envelope> |

When the repository is in the course of producing the requested report, a response will be sent that is very similar to listing 6. The estimated time of completion will be provided in the <Data> element. According to the documentation of the SUSHI XML schema, this element may be used for any other optional data.

<?xml version="1.0" encoding="UTF-8"?> <soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://schemas.xmlsoap.org/soap/envelope/ http://schemas.xmlsoap.org/soap/envelope/"> <soap:Body> <ReportResponse xmlns:ctr="http://www.niso.org/schemas/sushi/counter" xsi:schemaLocation="http://www.niso.org/schemas/sushi/counter http://www.niso.org/schemas/sushi/counter_sushi3_0.xsd" xmlns="http://www.niso.org/schemas/sushi" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"> <Requestor> <ID>www.logaggregator.nl</ID> <Name>Log Aggregator</Name> <Email>logaggregator@surf.nl</Email> </Requestor> <CustomerReference> <ID>www.leiden.edu</ID> <Name>Leiden University</Name> </CustomerReference> <ReportDefinition Release="urn:DRv1" Name="Daily Report v1"> <Filters> <UsageDateRange> <Begin>2009-12-22</Begin> <End>2009-12-23</End> </UsageDateRange> </Filters> </ReportDefinition> <Exception> <Number>3</Number> <Message>The report is not yet available. The estimated time of completion is provided under "Data".</Message> <Data>2010-01-08T12:13:00+01:00</Data> </Exception> </ReportResponse> </soap:Body> </soap:Envelope> |

Error numbers and the corresponding Error messages are also provided in the table below.

| Error number | Error message |
|---|---|
| 1 | The range of dates that was provided is not valid. Only daily reports are available. |
| 2 | The file describing the internet robots is not accessible |
| 3 | The report is not yet available. The estimated time of completion is provided under "Data" |
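A repository could decide which of these responses to return along the following lines. The function name, its boolean parameters and the order in which the checks are made are assumptions for illustration. Only the error numbers and messages come from the table above.

```python
from datetime import date, timedelta

EXCEPTIONS = {
    1: "The range of dates that was provided is not valid. Only daily reports are available.",
    2: "The file describing the internet robots is not accessible.",
    3: 'The report is not yet available. The estimated time of completion is provided under "Data".',
}


def check_request(begin: str, end: str, robot_file_accessible: bool, report_ready: bool):
    """Return None when the report can be sent, otherwise (number, message)."""
    period = date.fromisoformat(end) - date.fromisoformat(begin)
    if period > timedelta(days=1):  # period exceeds one day
        number = 1
    elif not robot_file_accessible:
        number = 2
    elif not report_ready:
        number = 3
    else:
        return None
    return number, EXCEPTIONS[number]
```

A return value of `None` corresponds to the case in which the Context Objects are sent immediately. Any other result would be placed in the `<Number>` and `<Message>` children of an `<Exception>` element.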

| <referent> | |
|---|---|
| SURE | Two identifiers for the requested document must be given in separate <identifier> elements, namely the OAI-PMH identifier and the URL of the document. |
| OA-S | The <identifier> element contains an identifier of that resource and is repeated for every known identifier of the resource. This should also include identifiers of more abstract resources which the accessed resource is a part of, e.g. a journal identifier. In order to facilitate compatibility with the German OA Network project, the OAI-PMH identifier of the resource's metadata (as issued by the repository's metadata OAI-PMH data provider) shall also be included. All identifiers must be given in URI format. |
| NEEO | Two <ctx:identifier> descriptors must be present: (1) Identifier of the object file downloaded (must correspond to the value of the "ref" attribute in the NEEO-DIDL descriptor for the objectFile) and (2) OAI identifier of the publication (of which the specific object file is downloaded) |

| <referring-entity> | |
|---|---|
| SURE | The URL that was received from the referrer and the classification of the search engine, if it was used, must be given in separate <identifier> elements. |
| OA-S | If available, an HTTP referrer has to be included in the ContextObject's <referring-entity> element. This indicates the entity which was directing to the used resource at the time of the usage event (if it was not forged). As a minimal requirement, this would be the URL provided by the HTTP referrer string. Additionally, all other known identifiers for that resource may also be specified. |
| NEEO | URL received from referrer |

| <requester> | |
|---|---|
| SURE | The <requester>, the agent who has requested the <referent>, must be identified by providing the C-class subnet and the encrypted IP-address, which must both be given in separate <identifier> elements. In addition, the name of the country where the request was initiated must be provided. The <metadata-by-val> element must be used for this purpose. The country must be given in <dcterms:spatial>. The dcterms namespace must be declared in the <format> element as well. |
| OA-S | The <requester> element holds information about the agent that generated the usage event, basically identifying the user that triggered the event. This includes the IP address (in a hashed form for privacy reasons), Class-C network address (also hashed), host name (reduced to only first and second level domain name, also for privacy reasons), a classification of the agent, a session ID and User Agent string. |
| NEEO | If this entity is used in a NEEO-SWUP ContextObject, then at least one <ctx:identifier> must be present, holding the MD5-encryption of the IP address of the browser from which the usage event occurs. |

| <service-type> | |
|---|---|
| SURE | The DC metadata term "type" is used to clarify whether the usage event involved a download of an object file or a metadata view. Choice between 'objectFile' and 'metadataView'. |
| OA-S | The <service-type> element classifies the used resource. This is based on metadata in the format specified by the "info:ofi/fmt:xml:xsd:sch_svc" scheme. This catalogue of classifications may be extended in a later stage of the OA Statistics project. The method of expressing this classification is prescribed by the ContextObject XML schema. |
| NEEO | The EO Gateway will consider all incoming NEEO-SWUP ContextObjects as representations for download events. This entity, if present, is ignored by the EO Gateway. |

| <referrer> | |
|---|---|
| SURE | This element is not used |
| OA-S | This element is not used |
| NEEO | If this entity is used in a NEEO-SWUP ContextObject, then at least one <ctx:identifier> must be present, holding a string that corresponds to the User-Agent header, which is transmitted in the HTTP transaction that generates the download. |

<resolver> |

|

SURE |

An <identifier> for the institution that provided access to the downloaded document must be given within <resolver>. |

OA-S |

This additional information may be optionally specified and is only sensible for link resolver usage data (as opposed to web servers or repositories). The <resolver> element specifies the URL of the OpenURL resolver itself. The <referrer> element specifies the identifier of the context from within the user triggered the usage of the target resource which is given via the <referent> element, and which was itself referenced by the <referring-entity> element (see above). |

NEEO |

Exactly 1 <ctx:identifier> descriptor must be present, holding the OAI baseURL for the repository that generates this ContextObject. This OAI baseURL must correspond exactly to the one given in the Admin file of the corresponding NEEO partner institution. |
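The hashing of the requester's IP address and Class-C network address described in the <requester> rows above can be sketched as follows. This is only an illustration under stated assumptions: the function names are hypothetical, the Class-C network is taken to be the /24 network address of an IPv4 address, and the address is hashed as a plain UTF-8 string without a salt. None of these details are prescribed by the text above.

```python
import hashlib
import ipaddress


def md5_hex(value: str) -> str:
    """Return the hexadecimal MD5 digest of a UTF-8 encoded string."""
    return hashlib.md5(value.encode("utf-8")).hexdigest()


def class_c_network(ip: str) -> str:
    """Return the /24 network address of an IPv4 address (assumed
    interpretation of 'Class-C network address')."""
    network = ipaddress.ip_network(f"{ip}/24", strict=False)
    return str(network.network_address)


def hashed_requester(ip: str) -> dict:
    """Build the two hashed identifiers for a requester (hypothetical
    dictionary keys, chosen for this sketch only)."""
    return {
        "ip_hash": md5_hex(ip),
        "class_c_hash": md5_hex(class_c_network(ip)),
    }


if __name__ == "__main__":
    # 192.0.2.57 is an address from the documentation range (RFC 5737).
    print(hashed_requester("192.0.2.57"))
```

Two requests from the same /24 network yield the same "class_c_hash" but different "ip_hash" values, which is what allows grouping by network without storing the address itself.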

The robot filter list will be published at a web location, yet to be determined, where services can easily find and use it.

An internet robot is defined according to the definitions on this page.

The table below shows the robot list, with descriptions, as determined on 2010-05-06. The list may be changed as the knowledge of the Working Group develops.

PLoS |

COUNTER |

NEEO |

AWStats |

Description |

||

[^a]fish |

|

|

|

|

||

[+:,\.\;\/\\-]bot |

|

|

|

|

||

acme\.spider |

|

|

|

|

||

alexa |

|

|

|

|

||

Alexandria(\s|\+)prototype(\s|\+)project |

Alexandria prototype project |

|

|

|

||

AllenTrack |

|

|

|

|

||

almaden |

|

|

|

|

||

appie |

|

|

|

|

||

Arachmo |

Arachmo |

|

|

|

||

archive\.org_bot |

|

|

|

|

||

arks |

|

|

|

|

||

asterias |

|

|

|

|

||

atomz |

|

|

|

|

||

autoemailspider |

|

|

|

|

||

awbot |

|

|

|

|

||

baiduspider |

|

|

|

|

||

bbot |

|

|

|

|

||

biadu |

|

|

|

|

||

biglotron |

|

|

|

|

||

bloglines |

|

|

|

|

||

blogpulse |

|

|

|

|

||

boitho\.com-dc |

|

|

|

|

||

bookmark-manager |

|

|

|

|

||

bot[+:,\.\;\/\\-] |

|

|

|

|

||

Brutus\/AET |

Brutus/AET |

|

|

|

||

bspider |

|

|

|

|

||

bwh3_user_agent |

|

|

|

|

||

cfnetwork| checkbot |

|

|

|

|

||

China\sLocal\sBrowse\s2\.6 |

|

|

|

|

||

|

Code Sample Web Client |

|

|

|

||

combine |

|

|

|

|

||

commons-httpclient |

|

|

|

|

||

ContentSmartz |

|

|

|

|

||

core |

|

|

|

|

||

crawl |

|

|

|

|

||

cursor |

|

|

|

|

||

custo |

|

|

|

|

||

DataCha0s\/2\.0 |

|

|

|

|

||

Demo\sBot |

|

|

|

|

||

docomo |

|

|

|

|

||

DSurf |

|

|

|

|

||

dtSearchSpider |

dtSearchSpider |

|

|

|

||

dumbot |

|

|

|

|

||

easydl |

|

|

|

|

||

EmailSiphon |

|

|

|

|

||

EmailWolf |

|

|

|

|

||

exabot |

|

|

|

|

||

fast-webcrawler |

|

|

|

|

||

favorg |

|

|

|

|

||

FDM(\s|\+)1 |

FDM 1 |

|

|

|

||

feedburner |

|

|

|

|

||

feedfetcher-google |

|

|

|

|

||

Fetch(\s|\+)API(\s|\+)Request |

Fetch API Request |

|

|

|

||

findlinks |

|

|

|

|

||

gaisbot |

|

|

|

|

||

GetRight |

GetRight |

|

|

|

||

geturl |

|

|

|

|

||

gigabot |

|

|

|

|

||

girafabot |

|

|

|

|

||

gnodspider |

|

|

|

|

||

Goldfire(\s|\+)Server |

Goldfire Server |

|

|

|

||

Googlebot |

Googlebot |

|

|

|

||

grub |

|

|

|

|

||

heritrix |

|

|

|

|

||

hl_ftien_spider |

|

|

|

|

||

holmes |

|

|

|

|

||

htdig |

|

|

|

|

||

htmlparser |

|

|

|

|

||

httpget-5\.2\.2 |

httpget-5.2.2 |

|

|

|

||

httrack |

|

|

|

|

||

HTTrack |

HTTrack |

|

|

|

||

ia_archiver |

|

|

|

|

||

ichiro |

|

|

|

|

||

iktomi |

|

|

|

|

||

ilse |

|

|

|

|

||

internetseer |

|

|

|

|

||

iSiloX |

iSiloX |

|

|

|

||

java |

|

|

|

|

||

jeeves |

|

|

|

|

||

jobo |

|

|

|

|

||

larbin |

|

|

|

|

||

libwww-perl |

libwww-perl |

|

|

|

||

linkbot |

|

|

|

|

||

linkchecker |

|

|

|

|

||

linkscan |

|

|

|

|

||

linkwalker |

|

|

|

|

||

livejournal\.com |

|

|

|

|

||

lmspider |

|

|

|

|

||

LOCKSS |

|

|

|

|

||

LWP\:\:Simple |

LWP::Simple |

|

|

|

||

lwp-request |

|

|

|

|

||

lwp-tivial |

|

|

|

|

||

lwp-trivial |

lwp-trivial |

|

|

|

||

lycos |

|

|

|

|

||

mediapartners-google |

|

|

|

|

||

megite |

|

|

|

|

||

Microsoft(\s|\+)URL(\s|\+)Control |

Microsoft URL Control |

|

|

|

||

milbot |

Milbot |

|

|

|

||

mj12bot |

|

|

|

|

||

mnogosearch |

|

|

|

|

||

mojeekbot |

|

|

|

|

||

momspider |

|

|

|

|

||

motor |

|

|

|

|

||

msiecrawler |

|

|

|

|

||

msnbot |

|

|

|

|

||

|

MSNBot |

|

|

|

||

MuscatFerre |

|

|

|

|

||

myweb |

|

|

|

|

||

NABOT |

|

|

|

|

||

nagios |

|

|

|

|

||

NaverBot |

NaverBot |

|

|

|

||

netcraft |

|

|

|

|

||

netluchs |

|

|

|

|

||

ng\/2\. |

|

|

|

|

||

no_user_agent |

|

|

|

|

||

nutch |

|

|

|

|

||

ocelli |

|

|

|

|

||

Offline(\s|\+)Navigator |

Offline Navigator |

|

|

|

||

OurBrowser |

|

|

|

|

||

perman |

|

|

|

|

||

pioneer |

|

|

|

|

||

playmusic\.com |

|

|

|

|

||

|

playstarmusic.com |

|

|

|

||

powermarks |

|

|

|

|

||

psbot |

|

|

|

|

||

python |

|

|

|

|

||

|

Python-urllib |

|

|

|

||

qihoobot |

|

|

|

|

||

rambler |

|

|

|

|

||

Readpaper |

Readpaper |

|

|

|

||

redalert| robozilla |

|

|

|

|

||

robot |

|

|

|

|

||

scan4mail |

|

|

|

|

||

scooter |

|

|

|

|

||

seekbot |

|

|

|

|

||

seznambot |

|

|

|

|

||

shoutcast |

|

|

|

|

||

slurp |

|

|

|

|

||

sogou |

|

|

|

|

||

speedy |

|

|

|

|

||

spider |

|

|

|

|

||

spider |

|

|

|

|

||

spiderman |

|

|

|

|

||

spiderview |

|

|

|

|

||

Strider |

Strider |

|

|

|

||

sunrise |

|

|

|

|

||

superbot |

|

|

|

|

||

surveybot |

|

|

|

|

||

T-H-U-N-D-E-R-S-T-O-N-E |

T-H-U-N-D-E-R-S-T-O-N-E |

|

|

|

||

tailrank |

|

|

|

|

||

technoratibot |

|

|

|

|

||

Teleport(\s|\+)Pro |

Teleport Pro |

|

|

|

||

Teoma |

Teoma |

|

|

|

||

titan |

|

|

|

|

||

turnitinbot |

|

|

|

|

||

twiceler |

|

|

|

|

||

ucsd |

|

|

|

|

||

ultraseek |

|

|

|

|

||

urlaliasbuilder |

|

|

|

|

||

voila |

|

|

|

|

||

w3c-checklink |

|

|

|

|

||

Wanadoo |

|

|

|

|

||

Web(\s|\+)Downloader |

Web Downloader |

|

|

|

||

WebCloner |

WebCloner |

|

|

|

||

webcollage |

|

|

|

|

||

WebCopier |

WebCopier |

|

|

|

||

Webinator |

|

|

|

|

||

Webmetrics |

|

|

|

|

||

webmirror |

|

|

|

|

||

WebReaper |

WebReaper |

|

|

|

||

WebStripper |

WebStripper |

|

|

|

||

WebZIP |

WebZIP |

|

|

|

||

Wget |

Wget |

|

|

|

||

wordpress |

|

|

|

|

||

worm |

|

|

|

|

||

Xenu(\s|\+)Link(\s|\+)Sleuth |

Xenu Link Sleuth |

|

|

|

||

y!j |

|

|

|

|

||

yacy |

|

|

|

|

||

yahoo-mmcrawler |

|

|

|

|

||

yahoofeedseeker |

|

|

|

|

||

yahooseeker |

|

|

|

|

||

yandex |

|

|

|

|

||

yodaobot |

|

|

|

|

||

zealbot |

|

|

|

|

||

zeus |

|

|

|

|

||

zyborg |

|

|

|

|

The robotlist.txt file might look like this:

2010-05-06 [^a]fish [+:,\.\;\/\\-]bot acme\.spider alexa Alexandria(\s|\+)prototype(\s|\+)project AllenTrack almaden appie Arachmo archive\.org_bot arks asterias atomz autoemailspider awbot baiduspider bbot biadu biglotron bloglines blogpulse boitho\.com\-dc bookmark\-manager bot[+:,\.\;\/\\-] Brutus\/AET bspider bwh3_user_agent cfnetwork| checkbot China\sLocal\sBrowse\s2\.6 combine commons\-httpclient ContentSmartz core crawl cursor custo DataCha0s\/2\.0 Demo\sBot docomo DSurf dtSearchSpider dumbot easydl EmailSiphon EmailWolf exabot fast-webcrawler favorg FDM(\s|\+)1 feedburner feedfetcher\-google Fetch(\s|\+)API(\s|\+)Request findlinks gaisbot GetRight geturl gigabot girafabot gnodspider Goldfire(\s|\+)Server Googlebot grub heritrix hl_ftien_spider holmes htdig htmlparser httpget\-5\.2\.2 httrack HTTrack ia_archiver ichiro iktomi ilse internetseer iSiloX java jeeves jobo larbin libwww\-perl linkbot linkchecker linkscan linkwalker livejournal\.com lmspider LOCKSS LWP\:\:Simple lwp\-request lwp\-tivial lwp\-trivial lycos mediapartners\-google megite Microsoft(\s|\+)URL(\s|\+)Control milbot mj12bot mnogosearch mojeekbot momspider motor msiecrawler msnbot MuscatFerre myweb NABOT nagios NaverBot netcraft netluchs ng\/2\. no_user_agent nutch ocelli Offline(\s|\+)Navigator OurBrowser perman pioneer playmusic\.com powermarks psbot python qihoobot rambler Readpaper redalert| robozilla robot scan4mail scooter seekbot seznambot shoutcast slurp sogou speedy spider spider spiderman spiderview Strider sunrise superbot surveybot T\-H\-U\-N\-D\-E\-R\-S\-T\-O\-N\-E tailrank technoratibot Teleport(\s|\+)Pro Teoma titan turnitinbot twiceler ucsd ultraseek urlaliasbuilder voila w3c\-checklink Wanadoo Web(\s|\+)Downloader WebCloner webcollage WebCopier Webinator Webmetrics webmirror WebReaper WebStripper WebZIP Wget wordpress worm Xenu(\s|\+)Link(\s|\+)Sleuth y!j yacy yahoo\-mmcrawler yahoofeedseeker yahooseeker yandex yodaobot zealbot zeus zyborg |
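A service that consumes such a list could apply it to the User-Agent string of each usage event roughly as follows. This is a minimal sketch, not a prescribed implementation: the function names are hypothetical, only a handful of entries from the list above are used, each entry is assumed to be a regular expression that may match anywhere in the User-Agent string, and matching is assumed to be case-insensitive by default (the presence of both "httrack" and "HTTrack" in the list suggests that some source lists may be case-sensitive, hence the switch).

```python
import re

# A few entries copied from the robot list above, for illustration only.
SAMPLE_PATTERNS = [
    r"[^a]fish",
    r"bot[+:,\.\;\/\\-]",
    r"Googlebot",
    r"Teleport(\s|\+)Pro",
    r"libwww\-perl",
    r"Wget",
]


def compile_robot_filter(patterns, ignore_case=True):
    """Combine all list entries into one compiled regular expression.

    Case-insensitive matching is an assumption of this sketch.
    """
    flags = re.IGNORECASE if ignore_case else 0
    combined = "|".join(f"(?:{p})" for p in patterns)
    return re.compile(combined, flags)


def is_robot(user_agent, robot_filter):
    """Return True if any list entry matches somewhere in the User-Agent."""
    return robot_filter.search(user_agent) is not None


if __name__ == "__main__":
    robot_filter = compile_robot_filter(SAMPLE_PATTERNS)
    for ua in [
        "Wget/1.12 (linux-gnu)",
        "Mozilla/5.0 (Windows NT 6.1) Firefox/3.6",
    ]:
        print(ua, "->", is_robot(ua, robot_filter))
```

Combining the entries into a single expression keeps the per-event check to one search call. Keeping the entries separate would instead make it possible to report which entry matched.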

Alternatively, it might look like the proposed XML version of the robot exclusion list:

<?xml version="1.0" encoding="UTF-8" ?>

<exclusions version="1.0" datestamp="2010-04-10">

<robot-list source="COUNTER" version="R3" datestamp="2010-04-01">

<description>Human-friendly description/notes about the COUNTER exclusion list</description>

<useragent>String to match for COUNTER</useragent>

<useragent>Another string to match for COUNTER</useragent>

<useragent>Etc.</useragent>

</robot-list>

<robot-list source="AWStats" version="x" datestamp="2009-10-02">

<description>Human-friendly description/notes about the AWStats exclusion list</description>

<useragent>String to match for AWStats</useragent>

<useragent>Another string to match for AWStats</useragent>

<useragent>Etc.</useragent>

</robot-list>

<robot-list source="PLoS" version="y" datestamp="2010-03-11">

<description>Human-friendly description/notes about the PLoS exclusion list</description>

<useragent>String to match for PLoS</useragent>

<useragent>Another string to match for PLoS</useragent>

<useragent>Etc.</useragent>

</robot-list>

</exclusions>

|
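If the XML form is adopted, a consuming service could read it with a standard XML parser. The sketch below assumes the element and attribute names of the proposal above (exclusions, robot-list, source, useragent). The function name and the abbreviated sample document are hypothetical, and the format itself is still only a proposal.

```python
import xml.etree.ElementTree as ET

# Abbreviated sample in the proposed format (placeholder content only).
SAMPLE_XML = b"""<?xml version="1.0" encoding="UTF-8" ?>
<exclusions version="1.0" datestamp="2010-04-10">
  <robot-list source="COUNTER" version="R3" datestamp="2010-04-01">
    <description>Notes about the COUNTER exclusion list</description>
    <useragent>String to match for COUNTER</useragent>
    <useragent>Another string to match for COUNTER</useragent>
  </robot-list>
  <robot-list source="AWStats" version="x" datestamp="2009-10-02">
    <description>Notes about the AWStats exclusion list</description>
    <useragent>String to match for AWStats</useragent>
  </robot-list>
</exclusions>
"""


def load_exclusions(xml_bytes):
    """Return a dict mapping each list's source to its useragent strings."""
    root = ET.fromstring(xml_bytes)
    lists = {}
    for robot_list in root.findall("robot-list"):
        source = robot_list.get("source")
        lists[source] = [
            ua.text for ua in robot_list.findall("useragent") if ua.text
        ]
    return lists


if __name__ == "__main__":
    for source, agents in load_exclusions(SAMPLE_XML).items():
        print(source, agents)
```

Keeping the entries grouped by source lets a service choose which exclusion lists to apply, for example only the COUNTER list.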